Many organizations adopt a service mesh because it solves tedious, complicated networking problems, especially in environments that make heavy use of microservices. A mesh also lets them manage application networking policies, such as load balancing and traffic management, in one central place. By Stewart Reichling and Srini Polavarapu @Google.

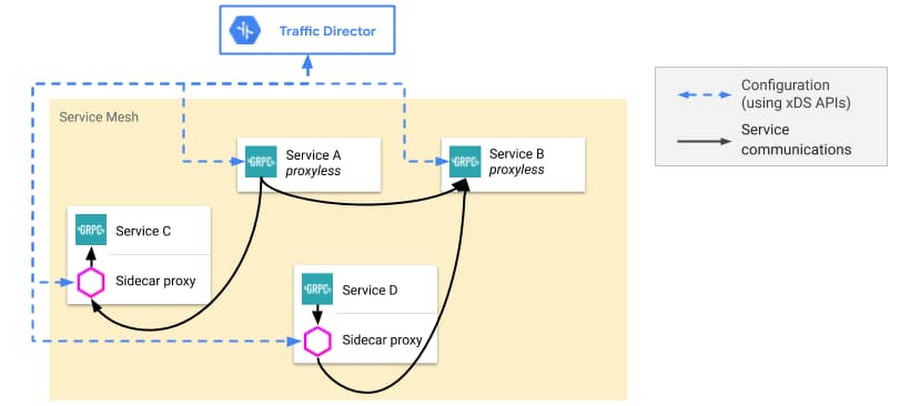

But adopting a service mesh has traditionally meant (1) managing infrastructure (a control plane), and (2) running sidecar proxies (the data plane) that handle networking on behalf of your applications.

Source: https://cloud.google.com/blog/products/networking/traffic-director-supports-proxyless-grpc

Google built Traffic Director, a Google Cloud-managed control plane, to remove the first barrier to service mesh adoption: you shouldn’t need to manage yet another piece of infrastructure (the control plane). With Traffic Director support for proxyless gRPC services, you can bring proxyless gRPC applications into your proxy-based service mesh, or even run a fully proxyless service mesh.
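In the proxyless model, the gRPC library itself talks to the control plane over xDS, so each application needs a bootstrap file telling it where that control plane is. gRPC locates the file through the `GRPC_XDS_BOOTSTRAP` environment variable. The Python sketch that follows builds such a file; it assumes the field layout that Google's Traffic Director bootstrap generator emits (`xds_servers`, `node`, and the `TRAFFICDIRECTOR_*` metadata keys), and the project number, network, zone, and node ID are hypothetical placeholders. Check the Traffic Director setup docs before relying on the exact fields.

```python
import json
import os
import tempfile


def build_bootstrap(project_number: str, network: str, zone: str, node_id: str) -> dict:
    """Return an xDS bootstrap config aimed at Traffic Director.

    Assumption: field names mirror the gRPC xDS bootstrap format and the
    output of Google's bootstrap generator; all argument values are placeholders.
    """
    return {
        "xds_servers": [
            {
                # Traffic Director's xDS endpoint, reached with Google default credentials.
                "server_uri": "trafficdirector.googleapis.com:443",
                "channel_creds": [{"type": "google_default"}],
                "server_features": ["xds_v3"],
            }
        ],
        "node": {
            "id": node_id,
            # Metadata the control plane uses to identify the project and VPC network.
            "metadata": {
                "TRAFFICDIRECTOR_NETWORK_NAME": network,
                "TRAFFICDIRECTOR_GCP_PROJECT_NUMBER": project_number,
            },
            "locality": {"zone": zone},
        },
    }


def write_bootstrap(config: dict, directory: str) -> str:
    """Write the config as JSON and point GRPC_XDS_BOOTSTRAP at it."""
    path = os.path.join(directory, "td-grpc-bootstrap.json")
    with open(path, "w", encoding="utf-8") as f:
        json.dump(config, f, indent=2)
    # gRPC reads this variable to find the bootstrap file at channel creation.
    os.environ["GRPC_XDS_BOOTSTRAP"] = path
    return path


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        cfg = build_bootstrap(
            project_number="123456789012",  # hypothetical project number
            network="default",
            zone="us-central1-a",
            node_id="projects/123456789012/networks/default/nodes/example-node",  # hypothetical
        )
        print(write_bootstrap(cfg, tmp))
```

With the variable set, a gRPC client would dial a target of the form `xds:///service-name` instead of a host and port, and the library resolves endpoints and policies from the control plane rather than from a sidecar.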

gRPC handles connection management, bidirectional streaming, and other critical networking functions. In short, it’s a great framework for building microservices-based applications.

The article then describes:

- Traffic Director support for proxyless gRPC services

- gRPC + xDS

- Getting started with proxyless gRPC

- When to deploy Traffic Director with proxyless gRPC services
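The "gRPC + xDS" idea is that traffic management policies are delivered by the control plane and applied inside the gRPC library instead of a proxy. As a rough illustration, here is weighted cluster selection, the kind of split an xDS route can express (for example, 90% of requests to a stable version and 10% to a canary). This is a simplified stand-in written for this summary, not gRPC's actual implementation; the `WeightedCluster` type, cluster names, and weights are hypothetical.

```python
import bisect
import itertools
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class WeightedCluster:
    name: str    # hypothetical backend service name
    weight: int  # relative share of traffic


def pick_cluster(clusters: list[WeightedCluster], rng: random.Random) -> str:
    """Pick a cluster with probability proportional to its weight."""
    cumulative = list(itertools.accumulate(c.weight for c in clusters))
    total = cumulative[-1] if cumulative else 0
    if total <= 0:
        raise ValueError("at least one cluster needs a positive weight")
    point = rng.randrange(total)  # uniform integer in [0, total)
    # First cluster whose cumulative weight exceeds the point; zero-weight clusters are skipped.
    return clusters[bisect.bisect_right(cumulative, point)].name


if __name__ == "__main__":
    split = [WeightedCluster("reviews-v1", 90), WeightedCluster("reviews-v2", 10)]
    rng = random.Random(7)
    picks = [pick_cluster(split, rng) for _ in range(1000)]
    print(picks.count("reviews-v1"), picks.count("reviews-v2"))
```

In a proxyless mesh, the weights would arrive from the control plane, so changing the split is a central configuration update rather than an application redeploy.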

Enterprise networks are heterogeneous, so Google built Traffic Director to be flexible enough to support the deployment options you need. Excellent read!
